- read messages from the RabbitMQ service

- interrogate SDS and retrieve full data about the specified datasets in JSON format

- update the EU Open Data Portal (ODP) using the CKAN API


- https://webgate.acceptance.ec.testa.eu/euodp/en/home

- https://webgate.acceptance.ec.testa.eu/euodp/data/apiodp/action/METHOD_NAME

Start the odpckan client with the following command:

$ sudo docker run -d \
    -e RABBITMQ_HOST=http://rabbitmq.apps.eea.europa.eu \
    -e RABBITMQ_PORT=5672 \
    -e RABBITMQ_USERNAME=client \
    -e RABBITMQ_PASSWORD=secret \
    -e CKAN_ADDRESS=https://open-data.europa.eu/en/data \
    -e CKAN_APIKEY=secret-api-key \
    -e SERVICES_SDS=http://semantic.eea.europa.eu/sparql \
    -e SDS_TIMEOUT=60 \
    -e CKANCLIENT_INTERVAL="0 */3 * * *" \
    -e CKANCLIENT_INTERVAL_BULK="0 0 * * 0" \
    eeacms/odpckan
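Inside the container, a Python process can read these settings from its environment. The following is a minimal sketch under stated assumptions, not the client's actual code: the `Config` dataclass and the `load_config` helper are hypothetical names, and treating every listed variable as required is an assumption.

```python
import os
from dataclasses import dataclass


@dataclass(frozen=True)
class Config:
    """Hypothetical container for the settings passed to `docker run`."""
    rabbitmq_host: str
    rabbitmq_port: int
    rabbitmq_username: str
    rabbitmq_password: str
    ckan_address: str
    ckan_apikey: str
    services_sds: str
    sds_timeout: int


# Variable names taken from the docker run command above.
REQUIRED = [
    "RABBITMQ_HOST", "RABBITMQ_PORT", "RABBITMQ_USERNAME",
    "RABBITMQ_PASSWORD", "CKAN_ADDRESS", "CKAN_APIKEY",
    "SERVICES_SDS", "SDS_TIMEOUT",
]


def load_config(environ=os.environ) -> Config:
    """Build a Config from a mapping of environment variables.

    Raises KeyError listing every missing or empty variable (assumption:
    all of them are mandatory).
    """
    missing = [name for name in REQUIRED if not environ.get(name)]
    if missing:
        raise KeyError("missing environment variables: " + ", ".join(missing))
    return Config(
        rabbitmq_host=environ["RABBITMQ_HOST"],
        rabbitmq_port=int(environ["RABBITMQ_PORT"]),      # numeric in the example
        rabbitmq_username=environ["RABBITMQ_USERNAME"],
        rabbitmq_password=environ["RABBITMQ_PASSWORD"],
        ckan_address=environ["CKAN_ADDRESS"],
        ckan_apikey=environ["CKAN_APIKEY"],
        services_sds=environ["SERVICES_SDS"],
        sds_timeout=int(environ["SDS_TIMEOUT"]),          # numeric in the example
    )
```

The two `CKANCLIENT_INTERVAL*` variables are left out of the sketch because they hold cron schedules rather than application settings. In standard cron syntax, `0 */3 * * *` fires at minute 0 of every third hour and `0 0 * * 0` fires at 00:00 every Sunday.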

For development, a docker-compose.yml file is provided. To set extra environment variables, copy docker-compose.override-example.yml to docker-compose.override.yml and customize it.

Dependencies

- Pika, a Python client for RabbitMQ

- ckanapi, a Python client for the CKAN API, used to work with ODP

- rdflib, a Python library for working with RDF

- rdflib-jsonld, JSON-LD parser and serializer plugins for RDFLib

Clone the repository:

$ git clone https://github.com/eea/eea.odpckan.git
$ cd eea.odpckan

Install all dependencies with pip:

$ pip install -r requirements.txt

The ODP CKAN entry point consumes all the messages from the queue and then stops. This command can be set up as a cron job:

$ python app/ckanclient.py -d    # debug mode: creates debug files for dataset data from SDS and ODP, before and after the update
$ python app/ckanclient.py       # default/working mode: reads and processes all messages from the specified queue

Inject test messages (default howmany = 1):

$ python app/proxy.py howmany

Query SDS (default url = https://www.eea.europa.eu/data-and-maps/data/eea-coastline-for-analysis-1) and print result:

$ python app/sdsclient.py -d    # debug mode: queries SDS and dumps a dataset and all datasets
$ python app/sdsclient.py       # default/working mode: initiates the bulk update

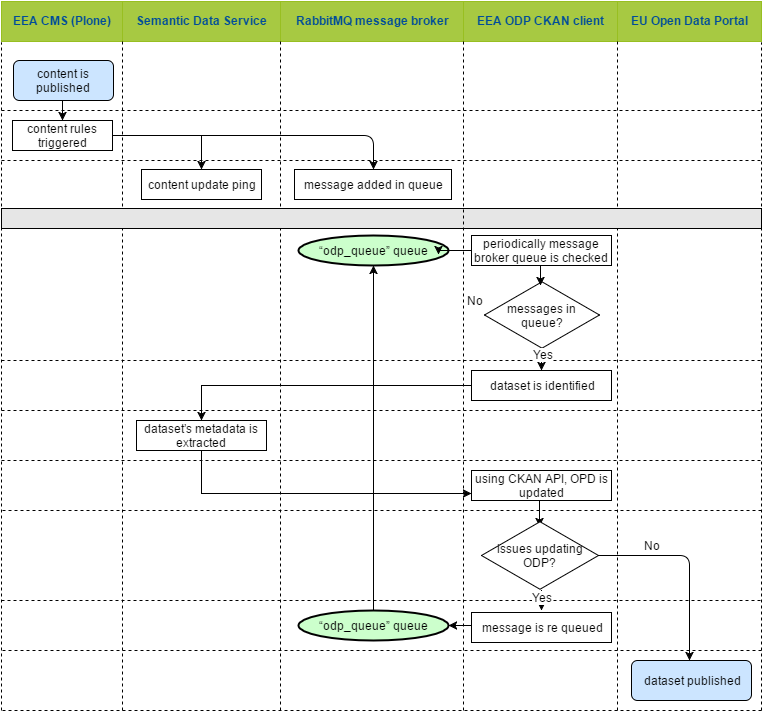

Information published on the EEA main portal is submitted to the EU Open Data Portal.

The workflow is described below:

- EEA CMS (Plone)

  - content is published

  - CMS content rules are triggered and the following operations are performed:

    - a message is added to the RabbitMQ message broker queue (see the example below)

    - SDS is pinged to update its harvested content

- EEA ODP CKAN client

  - the CKAN client is triggered periodically via a cron job

  - the CKAN client connects to the RabbitMQ message broker and consumes all the messages from the “odp_queue” queue; for each message it looks up the dataset in SDS and applies the change to ODP through the CKAN API

- EEA ODP CKAN client (bulk update operation)

  - is triggered periodically via a cron job

  - reads all the datasets from SDS

  - generates update messages in the RabbitMQ message broker, one message per dataset found
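The "consume everything, then stop" step of the workflow can be sketched as follows. This is an illustration rather than the client's real implementation: `drain_queue` and `open_channel` are hypothetical helper names, and acknowledging a message only after it has been handled is an assumed design choice. The `basic_get`, `basic_ack`, `PlainCredentials`, `ConnectionParameters` and `BlockingConnection` calls are part of Pika's public API.

```python
def drain_queue(channel, handle, queue="odp_queue"):
    """Handle every message currently waiting in `queue`, then return.

    `channel` is expected to behave like Pika's BlockingChannel and
    `handle` is a callable that receives the message body as text.
    Returns the number of messages handled.
    """
    handled = 0
    while True:
        method, _properties, body = channel.basic_get(queue=queue, auto_ack=False)
        if method is None:
            # basic_get yields (None, None, None) when the queue is empty,
            # which is the signal to stop until the next cron run.
            return handled
        handle(body.decode("utf-8"))
        # Acknowledge only after the handler returned, so a failure leaves
        # the message in the queue for a later run (assumed policy).
        channel.basic_ack(delivery_tag=method.delivery_tag)
        handled += 1


def open_channel(host, port, username, password):
    """Open a blocking connection and channel with Pika (imported lazily)."""
    import pika

    credentials = pika.PlainCredentials(username, password)
    parameters = pika.ConnectionParameters(
        host=host, port=port, credentials=credentials
    )
    connection = pika.BlockingConnection(parameters)
    return connection, connection.channel()
```

Passing the channel in as an argument keeps the loop independent of the broker, so it can be exercised with a stub object that exposes the same two methods.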

Message:

$ update|https://www.eea.europa.eu/data-and-maps/data/eea-coastline-for-analysis-1|eea-coastline-for-analysis-1

Message structure:

$ action|url|identifier

The "identifier" value is ignored; only the URL is used to look up the dataset in SDS.

Action(s):

$ create/update/delete
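To make the message format concrete, here is a small sketch of how a body in the `action|url|identifier` form could be parsed and built. The names `Message`, `parse_message` and `format_message` are hypothetical, and deriving the identifier from the last URL path segment is an assumption based on the example message above.

```python
from typing import NamedTuple

VALID_ACTIONS = {"create", "update", "delete"}


class Message(NamedTuple):
    action: str
    url: str
    identifier: str  # carried along, but not used for the SDS lookup


def parse_message(body: str) -> Message:
    """Split an `action|url|identifier` body and validate the action."""
    parts = [part.strip() for part in body.split("|")]
    if len(parts) != 3:
        raise ValueError(f"expected action|url|identifier, got {body!r}")
    action, url, identifier = parts
    if action not in VALID_ACTIONS:
        raise ValueError(f"unknown action {action!r}")
    return Message(action, url, identifier)


def format_message(action: str, url: str) -> str:
    """Build a message body for `url`.

    Assumption: the identifier is the last path segment of the URL.
    """
    if action not in VALID_ACTIONS:
        raise ValueError(f"unknown action {action!r}")
    identifier = url.rstrip("/").rsplit("/", 1)[-1]
    return f"{action}|{url}|{identifier}"
```

A bulk update could then produce one body per dataset with `[format_message("update", url) for url in dataset_urls]`, where `dataset_urls` stands for whatever list of dataset URLs was read from SDS.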

From the "app" directory, install development requirements, and run pytest:

pip install -r requirements-dev.txt
pytest

The tests use pre-recorded responses for SDS queries. To update the responses, run the tests in "spy" mode:

SDS_MOCK_SPY=true pytest
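The general record-and-replay idea behind such a "spy" mode can be sketched as below. This is not the project's test helper: `cached_query`, the `run_query` callable, the one-JSON-file-per-query layout and the SHA-256 file naming are all assumptions made for illustration. Only the `SDS_MOCK_SPY` variable name comes from the command above.

```python
import hashlib
import json
import os
from pathlib import Path


def cached_query(query, run_query, cache_dir, spy=None):
    """Replay a stored response for `query`, or record a fresh one.

    In spy mode the real `run_query` callable is invoked and its
    JSON-serialisable result is written to `cache_dir`. Otherwise the
    stored file is returned and the real service is never contacted.
    """
    if spy is None:
        spy = os.environ.get("SDS_MOCK_SPY", "").lower() == "true"
    # One file per distinct query text (hypothetical layout).
    key = hashlib.sha256(query.encode("utf-8")).hexdigest()
    path = Path(cache_dir) / f"{key}.json"
    if spy:
        response = run_query(query)
        path.parent.mkdir(parents=True, exist_ok=True)
        path.write_text(json.dumps(response), encoding="utf-8")
        return response
    if not path.exists():
        raise LookupError("no recorded response; re-run with SDS_MOCK_SPY=true")
    return json.loads(path.read_text(encoding="utf-8"))
```

With this shape, an ordinary test run is deterministic and works offline, while a spy run refreshes the recordings against the live service.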

The Initial Owner of the Original Code is European Environment Agency (EEA). All Rights Reserved.

The Original Code is free software; you can redistribute it and/or modify it under the terms of the GNU General Public License as published by the Free Software Foundation; either version 2 of the License, or (at your option) any later version.