(readme still being improved)

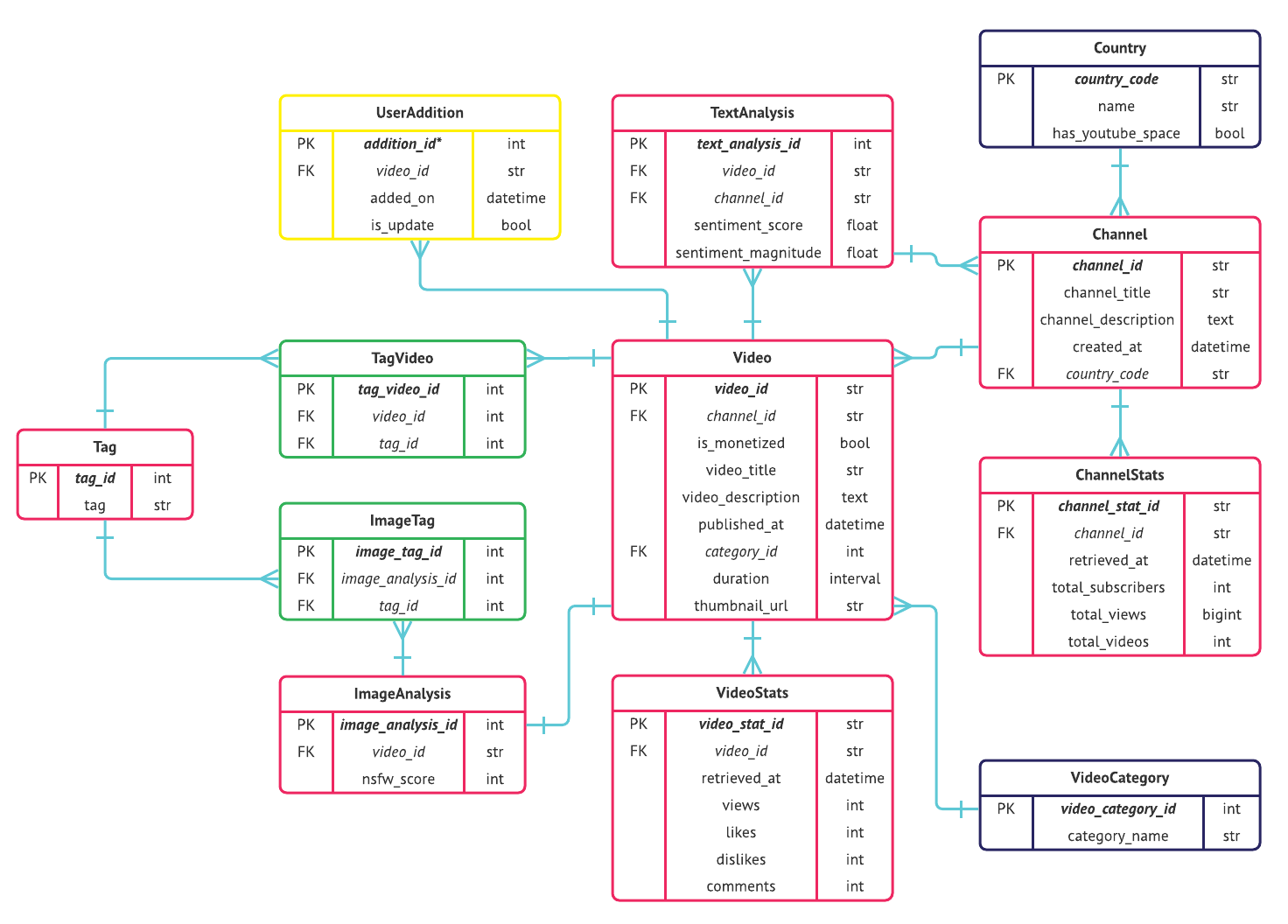

The YouTube Data Collective (YTDC) crowdsources information about YouTube video demonetization, giving creators third-party visibility into how YouTube's policies are implemented. When users submit new data, YTDC supplements it with data from the YouTube Data API, runs thumbnail image analysis with the Clarifai API, and runs sentiment analysis on the text fields with Google Cloud's Natural Language API. YTDC has a custom search engine built on an inverted index whose postings are stored in linked lists; results are ranked by tf-idf. D3's force layout visualizes connections among videos, drawing from an undirected graph implemented with an adjacency list. Another chart lets users compare video tags and uses a trie for autocomplete. YTDC launched with a seed data set of over 30,000 videos.
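A minimal sketch of an undirected graph stored as an adjacency list, with an export to the `nodes`/`links` arrays that D3's force layout is typically given. The README doesn't say what makes two videos "connected", so `build_tag_graph` assumes (hypothetically) that videos sharing a tag are linked. `UndirectedGraph`, `build_tag_graph`, and `to_d3` are illustrative names, not YTDC's actual code.

```python
from collections import defaultdict
from itertools import combinations


class UndirectedGraph:
    """Undirected graph stored as an adjacency list: node -> set of neighbors."""

    def __init__(self):
        self.adjacency = {}

    def add_node(self, node):
        # Isolated videos still get an (empty) neighbor set so they show up as nodes.
        self.adjacency.setdefault(node, set())

    def add_edge(self, a, b):
        self.add_node(a)
        self.add_node(b)
        if a != b:
            # Undirected: record the edge in both directions.
            self.adjacency[a].add(b)
            self.adjacency[b].add(a)

    def neighbors(self, node):
        return self.adjacency.get(node, set())

    def to_d3(self):
        """Flatten into the {"nodes": [...], "links": [...]} shape used with D3's force layout."""
        # sorted() assumes node IDs are mutually comparable (e.g. all strings).
        nodes = [{"id": node} for node in sorted(self.adjacency)]
        links = [
            {"source": a, "target": b}
            for a in sorted(self.adjacency)
            for b in sorted(self.adjacency[a])
            if a < b  # emit each undirected edge only once
        ]
        return {"nodes": nodes, "links": links}


def build_tag_graph(video_tags):
    """Hypothetical rule: connect two videos if they share at least one tag."""
    graph = UndirectedGraph()
    videos_by_tag = defaultdict(list)
    for video_id, tags in video_tags.items():
        graph.add_node(video_id)
        for tag in tags:
            videos_by_tag[tag].append(video_id)
    for videos in videos_by_tag.values():
        for a, b in combinations(videos, 2):
            graph.add_edge(a, b)
    return graph


graph = build_tag_graph({"v1": {"politics", "news"}, "v2": {"news"}, "v3": {"gaming"}})
print(graph.to_d3())
# {'nodes': [{'id': 'v1'}, {'id': 'v2'}, {'id': 'v3'}], 'links': [{'source': 'v1', 'target': 'v2'}]}
```

An adjacency list keeps memory proportional to the number of edges, which suits a sparse graph where most videos connect to only a few others.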

I wanted users to be able to look up information for individual channels and videos. I considered Elasticsearch, Whoosh, and PostgreSQL's built-in full-text search but ultimately decided to build a search engine from scratch (all the better to learn). I hunkered down with the highly readable "Introduction to Information Retrieval". Thanks also to Rebecca Weiss and Christian S. Perone, whose easy-to-understand yet sufficiently advanced online material taught me enough linear algebra to fully understand the vector operations behind tf-idf.
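To make the vector operations concrete, here is a minimal sketch of an inverted index whose postings are linked lists, ranked by cosine similarity between tf-idf vectors. It assumes a simple regex tokenizer and the plain weighting tf × log(N/df), which is one of several tf-idf variants; `Posting`, `InvertedIndex`, and its methods are hypothetical names, not YTDC's implementation.

```python
import math
import re
from collections import Counter


class Posting:
    """One node in a postings linked list: a document ID and its term frequency."""

    def __init__(self, doc_id, tf):
        self.doc_id = doc_id
        self.tf = tf
        self.next = None


class InvertedIndex:
    def __init__(self):
        self.heads = {}      # term -> first Posting in that term's linked list
        self.tails = {}      # term -> last Posting, so appends are O(1)
        self.df = Counter()  # term -> number of documents containing the term
        self.num_docs = 0

    @staticmethod
    def tokenize(text):
        return re.findall(r"[a-z0-9']+", text.lower())

    def add_document(self, doc_id, text):
        """Index one document (adding IDs in increasing order keeps each list sorted)."""
        self.num_docs += 1
        for term, tf in Counter(self.tokenize(text)).items():
            node = Posting(doc_id, tf)
            if term in self.heads:
                self.tails[term].next = node
            else:
                self.heads[term] = node
            self.tails[term] = node
            self.df[term] += 1

    def postings(self, term):
        """Walk the linked list for `term`."""
        node = self.heads.get(term)
        while node is not None:
            yield node
            node = node.next

    def idf(self, term):
        return math.log(self.num_docs / self.df[term]) if self.df[term] else 0.0

    def _doc_norms(self):
        """Euclidean length of each document's tf-idf vector."""
        sums = Counter()
        for term in self.heads:
            idf = self.idf(term)
            for posting in self.postings(term):
                sums[posting.doc_id] += (posting.tf * idf) ** 2
        return {doc_id: math.sqrt(total) for doc_id, total in sums.items()}

    def search(self, query):
        """Rank documents by cosine similarity between tf-idf query and document vectors."""
        query_vec = {
            term: tf * self.idf(term)
            for term, tf in Counter(self.tokenize(query)).items()
            if term in self.heads
        }
        query_norm = math.sqrt(sum(w * w for w in query_vec.values()))
        if query_norm == 0:
            return []
        doc_norms = self._doc_norms()
        dot = Counter()  # doc_id -> dot product with the query vector
        for term, query_weight in query_vec.items():
            idf = self.idf(term)
            for posting in self.postings(term):
                dot[posting.doc_id] += query_weight * posting.tf * idf
        ranked = [
            (doc_id, score / (query_norm * doc_norms[doc_id]))
            for doc_id, score in dot.items()
            if doc_norms[doc_id] > 0
        ]
        ranked.sort(key=lambda pair: (-pair[1], pair[0]))
        return ranked


index = InvertedIndex()
index.add_document(1, "cats and dogs")
index.add_document(2, "dogs dogs everywhere")
index.add_document(3, "election news")
print(index.search("dogs"))  # doc 2 ranks above doc 1
```

Only the postings for the query's terms are walked to compute dot products; recomputing every document norm per query is wasteful, so a real index would cache them after seeding.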

I wanted users to be able to compare demonetization percentages by video tag. The example below shows that videos tagged "Hillary Clinton" are more likely to be demonetized than those tagged "Donald Trump." (As noted above, a biased sample selection process and limitations in scraping make it impossible to infer anything meaningful from this data. But it is fun to look at.) I modeled the user experience on Google Trends.
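The comparison boils down to one ratio per tag: demonetized videos carrying the tag divided by all videos carrying it. A minimal sketch, assuming each video is represented as a hypothetical `(tags, is_demonetized)` pair rather than YTDC's real database rows; the toy input below is made up for illustration and is not YTDC data.

```python
from collections import defaultdict


def demonetization_rate_by_tag(videos, tags_of_interest):
    """Percent of videos carrying each tag that were demonetized (None if no videos).

    `videos` is an iterable of (tags, is_demonetized) pairs, a hypothetical
    stand-in for the real database rows. Tags are compared case-insensitively.
    """
    wanted = {tag.lower() for tag in tags_of_interest}
    totals = defaultdict(int)
    demonetized = defaultdict(int)
    for tags, is_demonetized in videos:
        for tag in {t.lower() for t in tags} & wanted:
            totals[tag] += 1
            if is_demonetized:
                demonetized[tag] += 1
    return {
        tag: round(100 * demonetized[tag] / totals[tag], 1) if totals[tag] else None
        for tag in sorted(wanted)
    }


# Toy input (not YTDC data): two "cooking" videos, one demonetized; one "gaming" video.
toy = [({"Cooking", "vlog"}, True), ({"cooking"}, False), ({"gaming"}, False)]
print(demonetization_rate_by_tag(toy, ["cooking", "gaming"]))
# {'cooking': 50.0, 'gaming': 0.0}
```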

Henry, a Hackbright instructor, encouraged me to implement autocomplete by looking into tries (pronounced like "try" with an s; I only say this because two MIT computer science grads pronounced it incorrectly—one thought it was "tree" and the other thought it was "tree-ay").

- Create classes for the `Trie` object with an `add_words` method and a `TrieNode` object with an `add_child` method and a `frequency` attribute for how many videos have used a particular tag. A particular sequence of characters constitutes a word (or tag) if its `frequency` is not 0 (the higher the frequency, the more common it is).

- When seeding the database, create two tries: one with every single tag (there ended up being some 150,000+ unique video tags) and another for tags with more than three occurrences (this pared it down to 16,000 unique video tags after culling ones like "christmas pond decorations" and "lebron invented barbershop").

- Pickle both tries and store them so they don't have to be regenerated every time.

- When a user visits `tags.html` (on page load): (a) the client sends the server an AJAX request for the most up-to-date tag trie; (b) the server unpickles the concise tag trie, a dictionary (where key = character, value = another dictionary containing information on frequency and children); (c) the server sends the client a JSONified version of the trie.

- As a user starts typing, a `keyup` JavaScript event triggers a search for all tags that begin with the typed input (see the `getAllWords` function below) and orders them by frequency, displaying the most relevant ones to the user. 😲 The fact that all of this happens so quickly (16,000 tags!) literally amazed me. 😲
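The trie steps above can be sketched in Python. `Trie`, `TrieNode`, `add_words`, `add_child`, and `frequency` come from the description; `to_dict`, `build_tries`, and `words_with_prefix` are hypothetical helpers, and the `"frequency"`/`"children"` key names in the JSON are assumptions. Since `getAllWords` runs client-side in JavaScript, `words_with_prefix` is only a Python analogue of that lookup, not the original function.

```python
import json
import pickle
from collections import Counter


class TrieNode:
    def __init__(self):
        self.children = {}  # character -> TrieNode
        self.frequency = 0  # videos using the tag that ends here; 0 means "not a tag"

    def add_child(self, char):
        """Return the child node for `char`, creating it if it doesn't exist yet."""
        if char not in self.children:
            self.children[char] = TrieNode()
        return self.children[char]


class Trie:
    def __init__(self):
        self.root = TrieNode()

    def add_words(self, words):
        """Insert each tag; every repeat of a tag bumps its frequency by one."""
        for word in words:
            node = self.root
            for char in word:
                node = node.add_child(char)
            node.frequency += 1

    def to_dict(self, node=None):
        """Nested dict, char -> {"frequency": n, "children": {...}}, ready for JSON."""
        if node is None:
            node = self.root
        return {
            char: {"frequency": child.frequency, "children": self.to_dict(child)}
            for char, child in node.children.items()
        }


def build_tries(all_tags):
    """Full trie of every tag, plus a concise trie of tags used more than three times.

    `all_tags` must be a list (it is iterated more than once).
    """
    counts = Counter(all_tags)
    full, concise = Trie(), Trie()
    full.add_words(all_tags)
    concise.add_words(tag for tag in all_tags if counts[tag] > 3)
    return full, concise


def words_with_prefix(trie_dict, prefix, limit=10):
    """Every tag starting with `prefix`, most frequent first (a getAllWords-style lookup)."""
    node = {"frequency": 0, "children": trie_dict}  # virtual root
    for char in prefix:
        node = node["children"].get(char)
        if node is None:
            return []
    matches = []
    stack = [(prefix, node)]
    while stack:  # depth-first walk of the subtree under the prefix
        word, current = stack.pop()
        if current["frequency"] > 0:
            matches.append((word, current["frequency"]))
        for char, child in current["children"].items():
            stack.append((word + char, child))
    matches.sort(key=lambda pair: (-pair[1], pair[0]))
    return [word for word, _ in matches[:limit]]


tags = ["news"] * 4 + ["newsletter"] * 2 + ["nature"]
full, concise = build_tries(tags)
restored = pickle.loads(pickle.dumps(concise))  # what the server would unpickle
payload = json.dumps(restored.to_dict())        # what the server would send the client
print(words_with_prefix(json.loads(payload), "new"))  # ['news']
print(words_with_prefix(full.to_dict(), "n"))         # ['news', 'newsletter', 'nature']
```

Walking down to the prefix costs one step per typed character, so only the subtree under the prefix is scanned on each keystroke, which helps explain why lookups over thousands of tags still feel instant.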

- I like data structures

- APIs can throw curveballs in myriad obscure ways

- It's amazing that Google is able to show me relevant results in way less than a second

- Linear algebra

- I'm so, so excited for my new life as a software engineer

I'm not deploying YTDC because it has way too many bugs and I have too many other projects I want to work on. If I were to improve my app, I'd do the following:

- 100% test coverage

- Enhance search functionality by:

    - Incorporating video/channel tags and descriptions into the inverted index (the resulting index was too large to pickle in memory on my computer, even after raising the maximum recursion depth)

    - Using a positional index to enable phrase queries, though this would increase space complexity

    - Using a B-tree and a reverse B-tree to enable wildcard queries (e.g., words that start with "q" or end in "x")

- Increase search efficiency by:

    - Implementing a priority queue with a heap so that each top-ranked result can be retrieved in O(log n) time instead of sorting every matching document

- Use AJAX to implement infinite scroll
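The heap-based priority queue idea from the list above could look like the following minimal sketch. It assumes search scores arrive as a dict mapping doc IDs to scores; `top_k_with_heap` is a hypothetical name, and Python's `heapq` stands in for a hand-rolled heap.

```python
import heapq


def top_k_with_heap(scores, k=10):
    """Return the k best (doc_id, score) pairs, highest score first.

    heapify is O(n) and each heappop is O(log n), so pulling k winners costs
    O(n + k log n) instead of the O(n log n) needed to sort every match.
    """
    # heapq implements a min-heap, so negate scores to pop the largest first.
    # Ties fall back to comparing doc_ids, so IDs must be mutually comparable.
    heap = [(-score, doc_id) for doc_id, score in scores.items()]
    heapq.heapify(heap)
    results = []
    while heap and len(results) < k:
        neg_score, doc_id = heapq.heappop(heap)
        results.append((doc_id, -neg_score))
    return results


scores = {"a": 0.2, "b": 0.9, "c": 0.5, "d": 0.9}
print(top_k_with_heap(scores, k=2))  # [('b', 0.9), ('d', 0.9)]
```

Popping lazily fits infinite scroll well: each new page only pays for the next few pops. If only a fixed k is ever needed, `heapq.nlargest(k, scores.items(), key=lambda item: item[1])` is a simpler alternative at O(n log k).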

- Languages: Python, JavaScript, HTML/CSS

- Frameworks: Flask/Jinja, jQuery, Bootstrap

- Database: PostgreSQL (via the SQLAlchemy ORM)

- Libraries: D3, Chart.js, Natural Language Toolkit (NLTK), NumPy

- APIs: YouTube Data API, Clarifai for image analysis, and Google Cloud's Natural Language API for sentiment analysis

I wouldn't have been able to do this without the support of my family—especially my mom and my youngest brother Hyuckin/David (who helped me build @cooobot, my gateway to coding), as well as Youngin and Jungin/Sarah, whose love and support gave me the confidence to dive into this very new adventure. Much love and thanks to my best friend and roommate Hannah, who's known and supported me since freshman year of college, as well as her sister Rachel, whom I used to describe as the "only woman engineer I know."

Note: The color scheme actually comes from Teespring's rebrand colors.

Also, I stumbled upon a computational linguistics class taught by one Chris Callison-Burch at UPenn. Working through the vector space model assignment (week 3) reminded me that I deeply enjoy (and am good at!) math. I'm excited to continue to work through the syllabus.

Another shoutout to Christopher Manning, Prabhakar Raghavan, Hinrich Schütze, and Stanford (and maybe Cambridge University Press?) for making the highly readable "Introduction to Information Retrieval" textbook available online for free.

(for so many people, coming soon!)