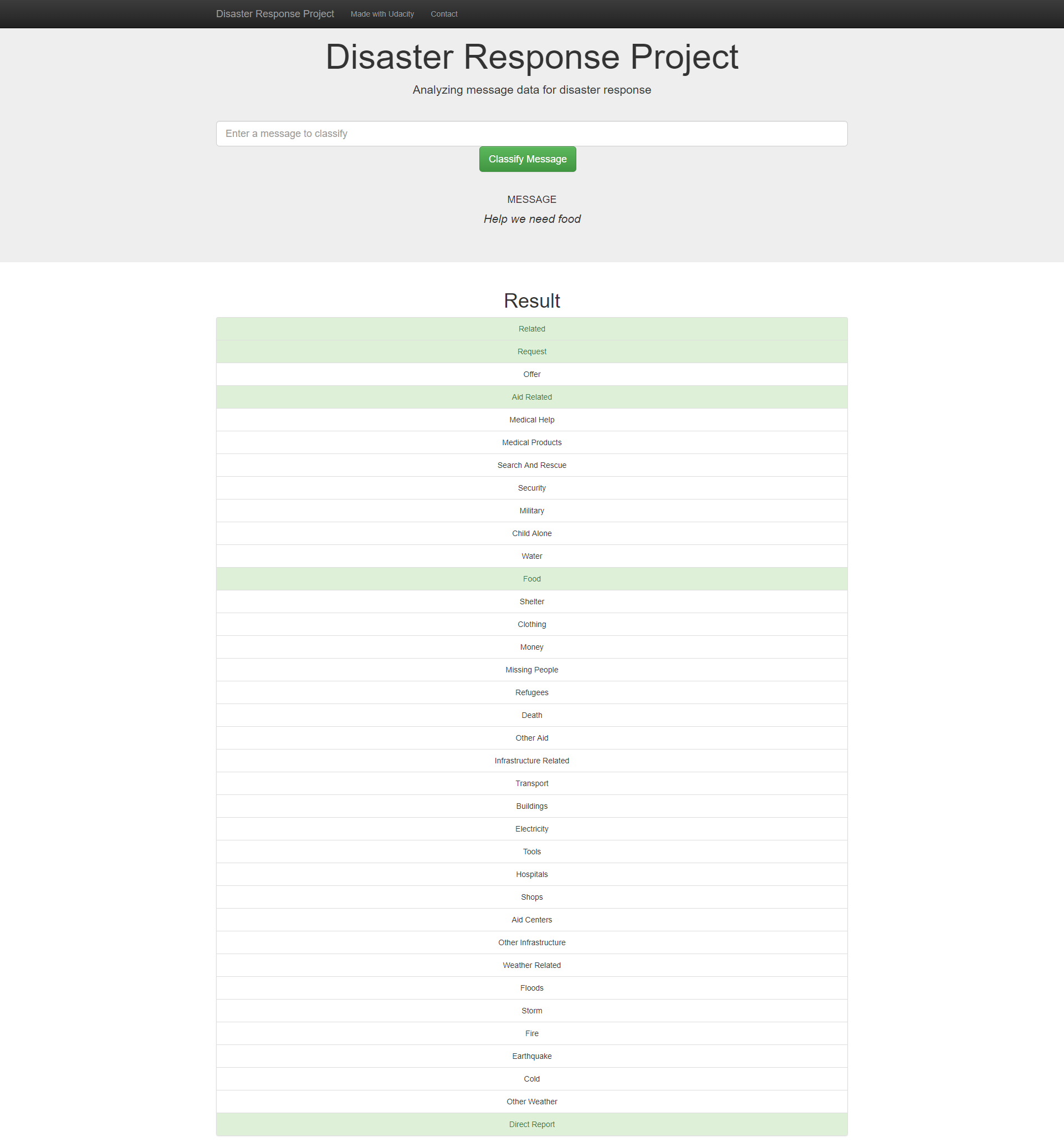

The goal of this project is to use supervised learning to classify messages sent during disaster response campaigns. The machine learning model was trained on data provided by Figure Eight. A Flask application classifies new messages and displays the results, along with a few extra graphs that summarize the contents of the provided data.
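As a rough illustration of the supervised, multi-category classification described above, the sketch below trains a tiny text classifier and round-trips it through `pickle`. Everything in it is an assumption made for illustration: the toy messages, the two category names, and the choice of scikit-learn with TF-IDF features and logistic regression. The project's actual model may be built differently.

```python
import pickle

import numpy as np
from sklearn.feature_extraction.text import TfidfVectorizer
from sklearn.linear_model import LogisticRegression
from sklearn.multioutput import MultiOutputClassifier
from sklearn.pipeline import Pipeline

# Toy messages invented for this sketch (not taken from the Figure Eight data).
messages = [
    "we need water and food",
    "the bridge collapsed after the flood",
    "please send drinking water",
    "roads are blocked by the storm",
    "food is running out in the shelter",
    "flood damaged the power lines",
]

# One row per message, one column per hypothetical category:
# column 0 = aid request, column 1 = infrastructure damage.
labels = np.array([
    [1, 0],
    [0, 1],
    [1, 0],
    [0, 1],
    [1, 0],
    [0, 1],
])


def build_model():
    """Text features followed by one binary classifier per category."""
    return Pipeline([
        ("tfidf", TfidfVectorizer()),
        ("clf", MultiOutputClassifier(LogisticRegression(max_iter=1000))),
    ])


model = build_model()
model.fit(messages, labels)

# Serialize and restore the fitted model, standing in for a saved .pkl file.
restored = pickle.loads(pickle.dumps(model))

# The prediction has one row per input message and one 0/1 column per category.
prediction = restored.predict(["we need water"])
print(prediction)
```

Each message can belong to several categories at once, which is why the sketch wraps a binary classifier in `MultiOutputClassifier` rather than training a single multi-class model.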

The project contains the following files:

.

├── Notebook Files

│ ├── DRData.db

│ ├── ETL Pipeline Preparation.ipynb

│ ├── ML Pipeline Preparation.ipynb

│ └── classifier.pkl

├── app

│ ├── templates

│ │ ├── go.html # Classification result page of web app

│ │ └── master.html # Main page of web app

│ └── run.py # Flask file that runs app

├── data

│ ├── DisasterResponse.db # Database to save clean data to

│ ├── disaster_categories.csv # Categories data to process

│ ├── disaster_messages.csv # Messages data to process

│ └── process_data.py # ETL pipeline script to clean data

├── models

│ ├── classifier.pkl # Saved Model

│ └── train_classifier.py # Train ML model

├── LICENSE

└── README.md
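The file list describes `process_data.py` as an ETL script that cleans the messages and categories data and saves the result to a database. A minimal sketch of that kind of step is shown below, using only the Python standard library. The column names (`id`, `message`, `categories`), the `name-0/1;name-0/1` category encoding, the table name `messages`, and the use of SQLite are all assumptions made for illustration; the real script may differ.

```python
import csv
import io
import sqlite3


def clean_rows(messages_file, categories_file):
    """Join messages and categories on `id` and expand categories into 0/1 columns."""
    messages = {row["id"]: row["message"] for row in csv.DictReader(messages_file)}
    cleaned = {}
    for row in csv.DictReader(categories_file):
        if row["id"] not in messages:
            continue  # skip categories with no matching message
        flags = {}
        # Assumed encoding: "water-1;infrastructure-0" -> {"water": 1, "infrastructure": 0}
        for item in row["categories"].split(";"):
            name, value = item.rsplit("-", 1)
            flags[name] = int(value)
        # Keying by id means duplicate ids collapse into a single row.
        cleaned[row["id"]] = {
            "id": int(row["id"]),
            "message": messages[row["id"]],
            **flags,
        }
    return list(cleaned.values())


def save_rows(rows, connection, table="messages"):
    """Write the cleaned rows to a (hypothetical) table, replacing any old copy."""
    columns = list(rows[0])
    column_sql = ", ".join(f'"{c}"' for c in columns)
    placeholders = ", ".join("?" for _ in columns)
    connection.execute(f'DROP TABLE IF EXISTS "{table}"')
    connection.execute(f'CREATE TABLE "{table}" ({column_sql})')
    connection.executemany(
        f'INSERT INTO "{table}" VALUES ({placeholders})',
        [tuple(row[c] for c in columns) for row in rows],
    )
    connection.commit()


# In-memory stand-ins for the two CSV files (invented data, with one duplicate row).
messages_csv = io.StringIO(
    "id,message\n1,we need water\n2,bridge is down\n2,bridge is down\n"
)
categories_csv = io.StringIO(
    "id,categories\n"
    "1,water-1;infrastructure-0\n"
    "2,water-0;infrastructure-1\n"
    "2,water-0;infrastructure-1\n"
)

rows = clean_rows(messages_csv, categories_csv)
conn = sqlite3.connect(":memory:")
save_rows(rows, conn)
stored = conn.execute(
    "SELECT id, message, water, infrastructure FROM messages ORDER BY id"
).fetchall()
print(stored)
```

Storing one 0/1 column per category gives a training script a ready-made label matrix, with the `message` column as the input text.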

1. Clone the Git repository.

2. Run the following commands in the project's root directory to set up your database and model.

   - To run the ETL pipeline that cleans the data and stores it in the database:

     python data/process_data.py data/disaster_messages.csv data/disaster_categories.csv data/DisasterResponse.db

   - To run the ML pipeline that trains the classifier and saves it:

     python models/train_classifier.py data/DisasterResponse.db models/classifier.pkl

3. Run the following command in the app's directory to run your web app. (Step 2 can be skipped if you wish to use the pretrained model.)

   python run.py

4. Go to http://127.0.0.1:3001/
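If the app fails to start after the setup step, one quick check is whether the ETL command actually wrote any tables to the database file. The helper below is a small sketch for that, assuming the `.db` file is a SQLite database (the extension suggests this, but the source does not state it). The function name `list_tables` is hypothetical.

```python
import sqlite3
from contextlib import closing


def list_tables(db_path):
    """Return the names of all tables in the SQLite database at db_path."""
    # closing() ensures the connection is released when the block exits.
    with closing(sqlite3.connect(db_path)) as connection:
        rows = connection.execute(
            "SELECT name FROM sqlite_master WHERE type = 'table' ORDER BY name"
        ).fetchall()
    return [name for (name,) in rows]


# Example call from the project's root directory:
# print(list_tables("data/DisasterResponse.db"))
```

An empty list means the file exists but holds no tables. Note that `sqlite3.connect` creates an empty file when the path does not exist, so an empty result can also indicate a mistyped path.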