Development and maintenance of this repo/package has ended. For the latest generation of LLAMA, see https://github.com/dropbox/llama

L.L.A.M.A. is a deployable service which artificially produces traffic for measuring network performance between endpoints.

LLAMA uses UDP socket-level operations to support multiple QoS classes. UDP datagrams are fast, efficient, and will hash across ECMP paths in large networks to uncover faults and erring interfaces. LLAMA is written in pure Python for maintainability.

LLAMA will eventually have all those capabilities, but not yet. For instance, it does not currently provide QoS functionality, but will send test traffic using hping3 or a built-in UDP library. It’s currently being tested in Alpha at Dropbox through experimental correlation.
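The idea described above, timestamped UDP probes carrying a QoS marking, can be illustrated with a minimal sketch. This is not LLAMA's own code or API: the names `reflect_once` and `probe` are hypothetical, and the example assumes a platform where `IP_TOS` can be set on a UDP socket (for example Linux).

```python
import socket
import struct
import time


def reflect_once(sock):
    """Echo a single datagram back to its sender (stand-in for a reflector)."""
    data, addr = sock.recvfrom(2048)
    sock.sendto(data, addr)


def probe(target, dscp=0, timeout=1.0):
    """Send one timestamped UDP probe to `target` (host, port).

    Returns the round-trip time in milliseconds, or None if no reply
    arrives within `timeout` seconds (treated as loss).
    """
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as s:
        # DSCP occupies the upper six bits of the IP TOS byte.
        s.setsockopt(socket.IPPROTO_IP, socket.IP_TOS, dscp << 2)
        s.settimeout(timeout)
        # Carry the send time in the payload so the reply is self-describing.
        s.sendto(struct.pack("!d", time.monotonic()), target)
        try:
            data, _ = s.recvfrom(2048)
        except socket.timeout:
            return None
        (sent,) = struct.unpack("!d", data)
        return (time.monotonic() - sent) * 1000.0
```

To try it, bind a UDP socket on a reflector host, call `reflect_once` on it in a loop or thread, and call `probe` with that socket's address and a DSCP value such as 46. Varying the source port between probes is one way to exercise different ECMP paths.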

- LLAMA on ReadTheDocs

- Changelog

- See Issues for TODOs and Bugs

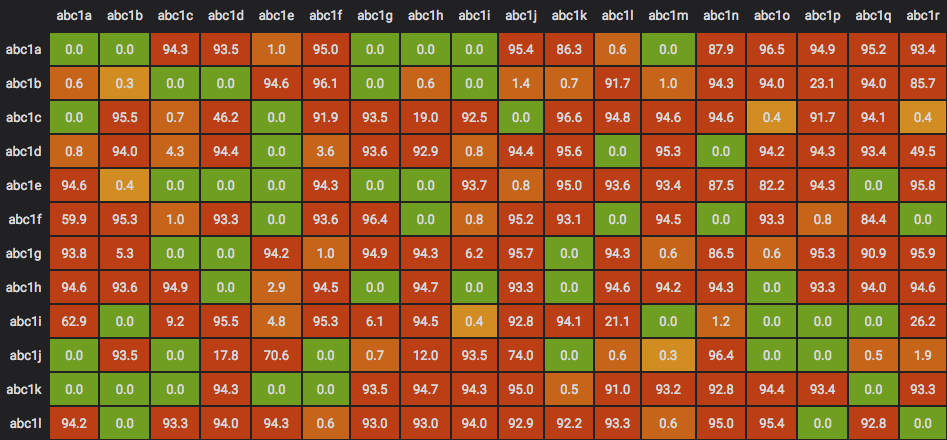

Using InfluxDB and interested in visualizing LLAMA data in a matrix-like UI?

Check out https://github.com/dropbox/grallama-panel

- Inspired by: https://www.youtube.com/watch?v=N0lZrJVdI9A
  - with slides: https://www.nanog.org/sites/default/files/Lapukhov_Move_Fast_Unbreak.pdf

- Concepts borrowed from: https://github.com/facebook/UdpPinger/