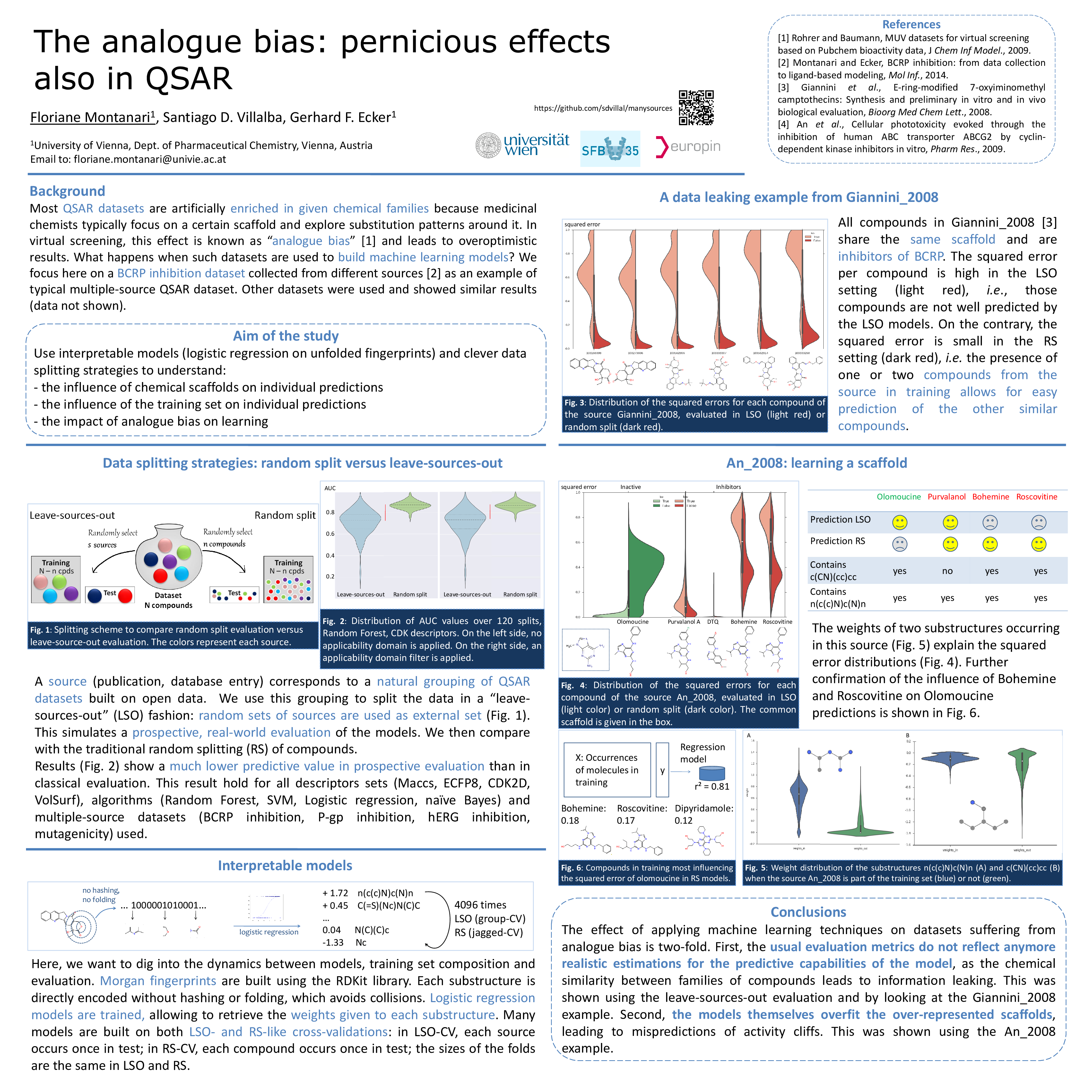

We revisit, through theory, discussion and (not so) anecdotal evidence, several issues that arise when applying statistical learning[1] to chemical datasets with scaffold overrepresentation, a problem commonly called analog bias in the cheminformatics literature.

Because of the way chemical collections are usually constructed (by exploring substitutions around scaffolds of interest), the way molecules are often represented when fed to statistical models (1D and 2D descriptors dominate the academic literature) and the way these models work (learning repeated discriminative or correlative patterns), two problems pervade models built using the average chemical dataset:

* Overoptimistic evaluation: inflated performance estimates that are irrelevant for generalisation

* Scaffold overfitting: models that give too much importance to overrepresented features and miss what is often more interesting, activity cliffs
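To make the first problem concrete, here is a minimal sketch of a split that keeps each scaffold entirely on one side of the train/test boundary, so that analogs of a test molecule cannot leak into training. It is only an illustration under stated assumptions: the function name `scaffold_grouped_split`, its parameters and the toy scaffold labels are hypothetical and are not part of manysources.

```python
import random
from collections import defaultdict


def scaffold_grouped_split(scaffolds, test_fraction=0.25, seed=0):
    """Split molecule indices so that no scaffold appears in both train and test.

    `scaffolds` holds one (hypothetical) scaffold label per molecule.
    """
    # Group molecule indices by their scaffold label
    groups = defaultdict(list)
    for index, scaffold in enumerate(scaffolds):
        groups[scaffold].append(index)

    # Visit scaffolds in a reproducible random order
    labels = sorted(groups)
    random.Random(seed).shuffle(labels)

    n_test_target = test_fraction * len(scaffolds)
    train, test = [], []
    for label in labels:
        # Whole scaffolds go to test until it is large enough, then to train
        target = test if len(test) < n_test_target else train
        target.extend(groups[label])
    return sorted(train), sorted(test)


# Toy data: scaffold "A" is heavily overrepresented
scaffolds = ["A"] * 6 + ["B"] * 2 + ["C"] * 2 + ["D"] * 2
train, test = scaffold_grouped_split(scaffolds, test_fraction=0.25, seed=0)

train_scaffolds = {scaffolds[i] for i in train}
test_scaffolds = {scaffolds[i] for i in test}
print("shared scaffolds:", train_scaffolds & test_scaffolds)
```

A plain random split of the same toy data would almost always put some "A" molecules on both sides, which is how performance estimates end up inflated. Note that the test set can overshoot `test_fraction` when a large scaffold is drawn first; that is the price of never splitting a scaffold.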

The tools in this repository are useful on their own too. Some highlights:

- We use unfolded, unhashed fingerprints.

  - Pros: good for model interpretability and for avoiding the effects of hash clashes. They usually also give better performance[2].

  - Cons: no more regularisation by hashing; one needs a model that scales well to very high dimensionality.

- We provide a general framework to understand individual molecule predictions in the context of a concrete dataset.

  - It links predictions to feature importance, assesses the influence of other molecules and selects influential molecules.

  - It provides quantitative and qualitative insight into the warts of evaluation and the whys of predictions.

  - It can be extended to provide different hypotheses for individual molecule predictions at model deployment time.
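The trade-off behind unfolded, unhashed fingerprints can be illustrated with a small pure-Python sketch. It assumes each molecule is already described by a set of integer substructure identifiers; the identifiers, the function names and the use of a plain modulo as the folding step are all simplifications made up for this example, not the manysources implementation.

```python
def unfolded_columns(molecule_features):
    """Assign one column per distinct substructure identifier (no hashing)."""
    vocabulary = sorted({f for features in molecule_features for f in features})
    return {feature: column for column, feature in enumerate(vocabulary)}


def folded_columns(molecule_features, n_bits):
    """Fold identifiers into n_bits columns by modulo, a stand-in for hashing."""
    return {f: f % n_bits for features in molecule_features for f in features}


# Hypothetical integer substructure identifiers for three molecules
molecules = [{3, 11, 42}, {11, 20}, {3, 27, 42}]

unfolded = unfolded_columns(molecules)
folded = folded_columns(molecules, n_bits=8)

# Unfolded: every substructure keeps its own column, so a model weight
# can be traced back to exactly one substructure
print("unfolded columns:", len(set(unfolded.values())))

# Folded: distinct substructures can share a column (a hash clash)
clashes = {}
for feature, column in folded.items():
    clashes.setdefault(column, []).append(feature)
print("clashes:", {c: sorted(fs) for c, fs in clashes.items() if len(fs) > 1})
```

In this toy case the unfolded representation needs five columns while the folded one squeezes three different substructures into a single column. On real datasets the unfolded vocabulary grows with the number of distinct substructures, which is why the model has to cope with very high dimensionality.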

We include several of the datasets we use in our study in this repository (see data).

- Use `manysources/datasets.py` for feature extraction.

- Use `manysources/experiments.py` to generate new results. These build and evaluate models for many different data partitions (note that we run these for a few hours in something like 30 parallel jobs).

- Use `manysources/hub.py` to easily link everything, from molecules to features to model to prediction and back.

- There are some example analyses in `manysources/analyses`.

We recommend using the Anaconda scientific Python distribution to install manysources and its dependencies. The dependencies are listed in setup.py. Assuming we are using a conda environment, these commands install the required software:

    conda install numpy scipy pandas h5py matplotlib seaborn joblib scikit-learn cytoolz networkx numba
    conda install -c rdkit rdkit
    pip install whatami tsne argh

To install manysources itself there are a few options:

- Download a zip file or clone the manysources repository, and then tweak `$PYTHONPATH` or use `pip install -e`

...or...

- `pip install git+git://github.com/sdvillal/manysources.git`

Proper releases are coming (at least there will be one when a publication happens).

This is the code we (Floriane, Santi) are using in our experiments. A documented, stable and more featureful release is happening in 2015 Q4. In the meantime, feel free to peek around the code, it is not too bad!